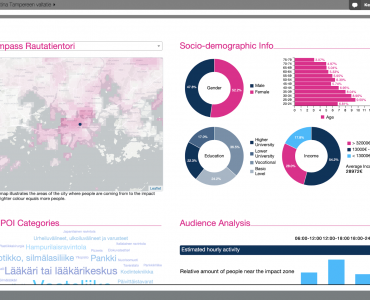

**Data & analytics in out-of-home vs digital advertising** Audience data is a critical aspect of …

**What is data fusion?** Data fusion is the process of getting data from multiple sources …

**What’s the role of experimentation in product and service development?** You might want to create …

**What is open data and why is it important for your business?** Open Data is defined …

**WhereOS launches an innovation platform for a hyperconnected world** We live in a hyperconnected world where …

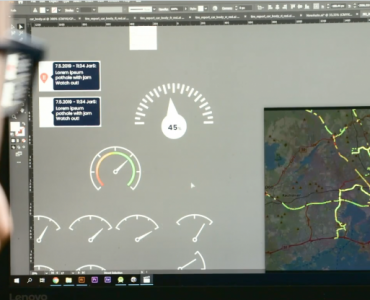

**Speeding Up Development and Deployment of Solutions for the Customers of EEE Innovations** EEE Innovations …